Can AI really copy a brand like Liquid Death?

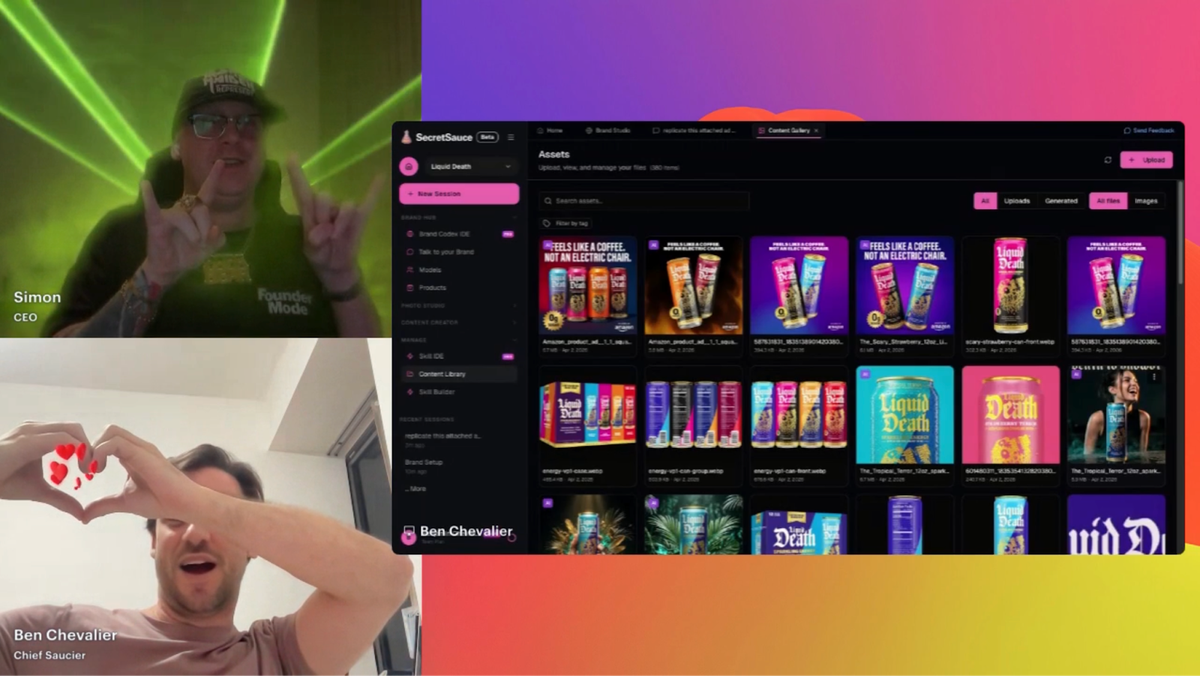

Last week, Ben and I went live in front of about 100 people and tried to replicate one of the most distinctive brands in the world using SecretSauce. We went in blind, with a brand we'd never worked with.

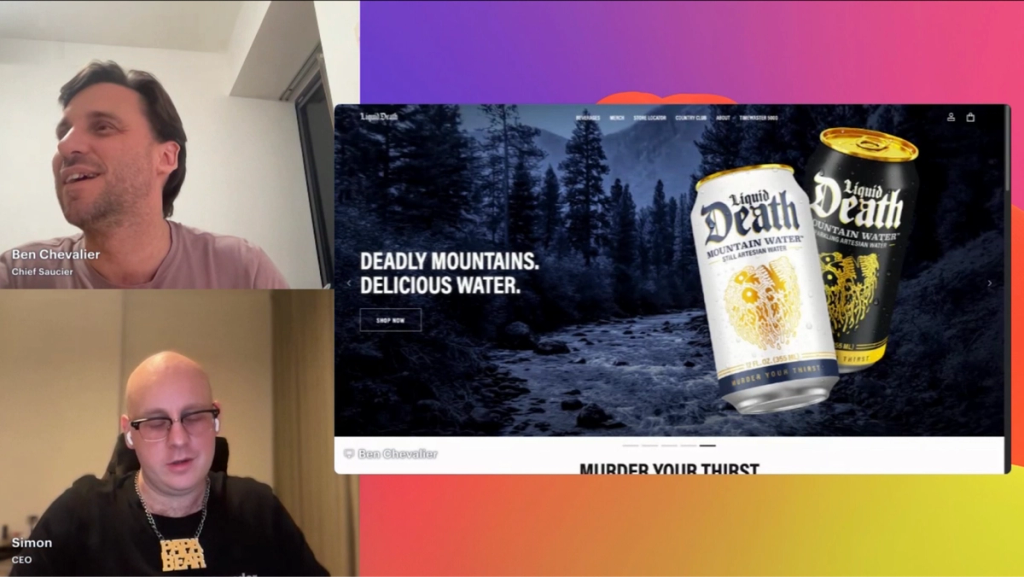

We picked Liquid Death because it's genuinely hard to copy. The CEO came from X Games marketing. He wanted something you could look cool holding that was just water, and he pulled it off by building a brand with a very specific atmosphere: horror-movie visuals, irreverent humor, intricate product design, and a tone of voice somewhere between a metal band and a stand-up comedian. The whole thing only works if every piece of it is consistent.

We had a theory about the one thing that would make the difference. If it worked on Liquid Death, it would work on any brand. If it didn't, we'd find out live in front of 100 people.

Most AI tools solve the easy layer; brand memory is about the hard layer

The easy branding layer is colors, fonts, and a logo. Any AI tool that can read a website can pull those. They're the things you can point at.

The hard branding layer is everything else. Ben described it during the session: you look at Liquid Death's Instagram and it's horror movie-ish, but you don't always see the logo in the content. You have the can, which is very specific, but the rest is colors and atmospheric treatments. You have to see it to feel it. You can't just say "use that color, use that color, put blue." That gets you nowhere near the actual brand.

That's the problem most AI content tools never actually solve: keeping content truly on-brand beyond basic brand kits. They store the easy layer and call it a brand kit. You get consistent logos and consistent color palettes, and then completely inconsistent everything else, because the atmospheric, tonal, and compositional decisions that make a brand feel like itself aren't encoded anywhere.

SecretSauce’s Brand Brain is built to capture the hard layer, the part most AI tools miss when trying to keep content consistent. The Liquid Death experiment was designed to find out how well.

What brand learning actually looks like

Ben dropped the Liquid Death URL into SecretSauce. The platform crawled the site and pulled colors, fonts, logo variants, product details, and copy. Then it built a Brand Brain.

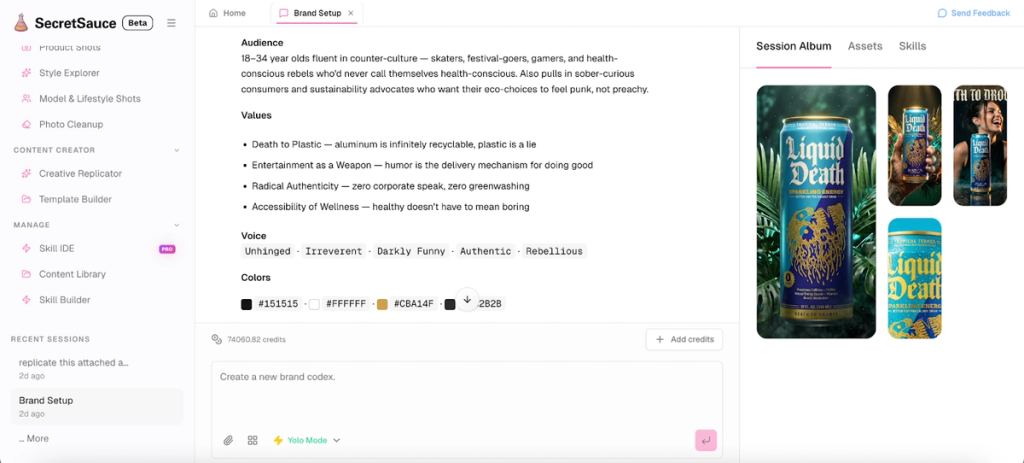

The Brand Brain is a structured set of rules: verbal identity with specific keywords and banned words, logo usage guidelines across background types, typographic hierarchy, audience definition, and content guardrails.

For Liquid Death, it automatically surfaced that the brand’s headlines should be all caps. It flagged words the brand doesn't use. It identified three or four distinct fonts on the can and understood the context for each one.

And it described the brand voice as "unhinged, irreverent, funny, authentic, rebellious." Ben didn't type any of that. The platform read the brand and understood it.
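To make "a structured set of rules" concrete, here is a minimal sketch of how a few of those rules could be stored and checked. It is purely illustrative: the `BrandRules` class, its field names, and `check_headline` are hypothetical and say nothing about how SecretSauce actually stores a Brand Brain. The banned word is a placeholder, since the session didn't list the real ones.

```python
import re
from dataclasses import dataclass, field


@dataclass
class BrandRules:
    """Hypothetical, heavily simplified stand-in for a brand rule set."""
    voice_keywords: list[str] = field(default_factory=list)
    banned_words: list[str] = field(default_factory=list)
    headlines_all_caps: bool = False


def check_headline(headline: str, rules: BrandRules) -> list[str]:
    """Return the rule violations for a headline (an empty list means it passes)."""
    problems = []
    if rules.headlines_all_caps and headline != headline.upper():
        problems.append("headline should be all caps")
    # Compare whole words, case-insensitively, against the banned list.
    words = set(re.findall(r"[a-z']+", headline.lower()))
    for banned in rules.banned_words:
        if banned.lower() in words:
            problems.append(f"banned word: {banned}")
    return problems


rules = BrandRules(
    voice_keywords=["unhinged", "irreverent", "funny", "authentic", "rebellious"],
    banned_words=["synergy"],  # placeholder, not taken from the session
    headlines_all_caps=True,
)

print(check_headline("DEATH TO DROWSY", rules))
print(check_headline("Death to Drowsy", rules))
```

The point of the sketch is only that rules like "headlines are all caps" become something a system can apply on every asset, instead of something a person has to remember.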

My reaction watching this live: "It's nailed it. (Phew)."

Ben's framing of what Brand Brain makes possible: the platform is learning how the brand is supposed to be treated. That distinction (treated, rather than referenced) is the one that matters.

Most tools reference a brand. Our Brand Brain learns how to treat it, which is the difference between content that looks right and content that actually stays consistent.

The output that proved the point

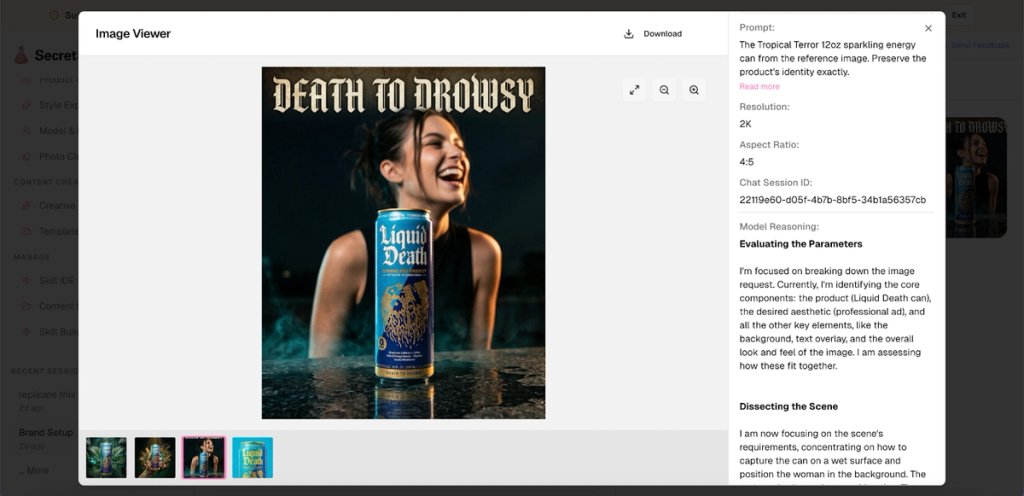

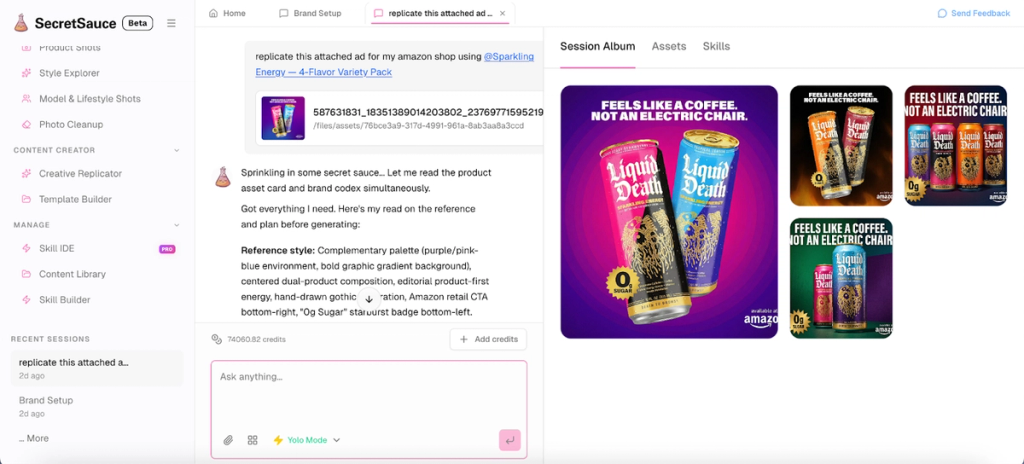

Ben asked for two Instagram assets for Tropical Terror, one of Liquid Death's new energy drink SKUs. The prompt was conversational, like you see below.

The platform knew the Instagram dimensions automatically. It pulled the Tropical Terror product card it had built during ingestion, including product details, CTAs, and associated copy. Then it made creative decisions: dark forest background, volcanic rocks, foggy atmosphere, cracked and dry soil texture. Horror meets tropical. It also placed the tagline, "Death to Drowsy," correctly and in the right typographic treatment, without being asked.

Someone in the live comments said: “It’s scary good.”

That's the moment the test was really decided. The Brand Brain encoded the tagline as a brand truth during ingestion and applied it when relevant, the way a creative director who actually knows the brand would.

I said to the audience that I wouldn't know this was AI.

Why the reasoning step is the product

At one point during the session, I asked Ben why generation takes longer with SecretSauce than with a basic AI image tool. His answer explains the whole architecture.

The majority of the processing time is reasoning. Before the platform generates anything, it reads everything it knows about the brand, the product, the user's preferences, and the requested format. It works through all of it before it acts, which is what allows the output to land much closer to what the brand actually needs.

I liked Ben's analogy: when you work with another person, the more context you give them, the better their decisions. The Brand Brain holds all the context permanently and reasons through it before generating.

That reasoning step is the Brand Brain working. It's what makes it possible to get outputs right on the first try. You ask for an asset in a few words and get something publish-ready because the platform already holds everything it needs to make a good decision.

The alternative is what we called the prompt lottery. With a generic AI image tool, you describe your brand from scratch every time and hope the output lands. Sometimes it does, mostly it doesn't, and you spend more time fixing and re-prompting than you would have spent doing it yourself. The lottery is a structural problem: the tool has no memory, so every generation starts from zero.

The Brand Brain eliminates the lottery by building the brief into the system.
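As a rough illustration of "building the brief into the system," the sketch below merges stored context with a short request before anything is generated. The `build_brief` function and the dictionary keys are hypothetical, not SecretSauce's API. The values come from the session described here, and user preferences are left out for brevity.

```python
def build_brief(request: str, brand: dict, product: dict, fmt: dict) -> str:
    """Merge stored brand, product, and format context with a short request."""
    lines = [
        f"Request: {request}",
        f"Brand voice: {', '.join(brand['voice'])}",
        f"Product: {product['name']} (tagline: {product['tagline']})",
        f"Format: {fmt['channel']}",
    ]
    return "\n".join(lines)


# Context that would be captured once, at ingestion (hypothetical structure).
brand = {"voice": ["unhinged", "irreverent", "funny", "authentic", "rebellious"]}
product = {"name": "Tropical Terror", "tagline": "Death to Drowsy"}
fmt = {"channel": "Instagram"}

# The user only types the short request; the rest is filled in from memory.
brief = build_brief("two Instagram assets for Tropical Terror", brand, product, fmt)
print(brief)
```

With a tool that has no memory, everything in `brand`, `product`, and `fmt` would have to be retyped into every prompt. Here the request stays a few words long and the stored context does the rest.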

The honest version of how the demo went

The assets were good. Really good. Colors nailed, fonts matched, brand atmosphere intact, tagline placed correctly.

At one point Ben flagged that one output read more like an energy drink than water and was about to call it a mistake. Then we looked closer. The platform had used the caffeinated Liquid Death SKU and written the right copy for it. It wasn't wrong; we were. Ben appropriately called himself fake news.

The final Amazon ad was generated in the asset library rather than appearing in the chat window, which gave us a brief moment of thinking it hadn't worked. It had. Ben said it best: "We're still in beta. It's only going to get better."

My version: this is the worst the product will ever be.

What the Liquid Death test tells us

The point was never about Liquid Death specifically. It was about whether AI could learn a brand this opinionated, this atmospheric, this intricate, built over years, and reproduce it faithfully without being re-explained every time.

If it can do that with Liquid Death, it can do it with yours.

Whether you're a marketing team where every new asset starts from scratch because the brief lives outside the tools, or a solopreneur who's been building a brand informally through the way you talk about your product, the problem is the same. Brand knowledge needs to live inside the system that produces content if you want AI to consistently reflect the brand. Once it does, consistency stops being something you enforce and starts being something that just happens.

Liquid Death took years to build something that feels completely, unmistakably like itself. The test last week was whether AI could learn that in minutes and produce content worthy of it. It could. That's what brand memory is supposed to do.

Want to see what SecretSauce does with your brand? Try it at trysecretsauce.ai.